AI MVP Development: A Step-by-Step Execution Guide and Best Tools

Last updated: 27 February 2026

More than 80% of enterprises are expected to use generative AI in production by the end of 2026. Yet many AI initiatives still stall before they deliver measurable value. Budgets are approved, models are tested, and demos look impressive. But once exposed to real users, the results often fall short.

That’s where AI MVP development becomes critical. In our guide, we explain what an AI MVP actually is. You’ll see how it differs from a demo, prototype, or research experiment. You’ll also learn how to choose the right use case, define measurable success criteria, design a lean data layer, and select tools that reduce complexity instead of adding it.

And, most importantly, you’ll understand how to connect technical performance to real user outcomes.

Key takeaways

- An AI MVP must work in a real workflow with early users and real user data (not in a demo setup).

- Success means proven business impact and proven feasibility (reliability, latency, cost, compliance) for the AI model.

- Keep scope tight: one use case, one primary flow, and clear exclusions, so the AI MVP development process does not drift into overbuilding.

- Early on, data readiness and traceable outputs matter more than sophisticated modeling, custom machine learning, or even the choice of pre-trained AI model. Pick the right AI tools for the job.

- Treat evaluation and monitoring as essentials in AI-powered MVP development, continuously collect user feedback, then decide to kill, pivot, or scale based on evidence.

What Is AI MVP Development?

AI MVP development is the process of building a minimum viable product that includes a clearly defined AI capability and releasing it to real users to validate both business value and technical feasibility.

The product must work in a real environment. It can be narrow in scope, but the AI component needs to operate within an actual workflow, using accurate data and producing outputs that influence user decisions.

The objective is to reduce uncertainty. An AI MVP tests whether the solution solves a meaningful problem and whether it can be delivered reliably with the available data, models, infrastructure, and constraints.

How an AI MVP differs from a demo, prototype, or model experiment

Teams often use these terms interchangeably, but they serve different purposes.

A demo presents a controlled scenario. Outputs are often predefined, and edge cases are hidden. It illustrates an idea but does not validate behavior in production conditions.

A prototype focuses on interaction design. It helps test flows, usability, and assumptions about user behavior. The AI outputs may be simulated, and performance is rarely evaluated under real-world constraints.

A model experiment lives in a research environment. It measures accuracy, precision, recall, or other technical metrics. This stage helps assess algorithm quality but does not confirm that the model creates user value once embedded in a product.

An AI MVP connects model performance to user outcomes. It integrates the model into a usable product, exposes it to real workflows, and measures adoption, task completion, reliability, and operational impact. That connection between technical performance and business value defines the MVP.

What an AI MVP must prove

An AI MVP must prove value and feasibility.

Value means users adopt the AI capability, and it improves a measurable outcome, such as:

- reduced manual effort;

- faster resolution;

- better decisions.

Feasibility means the system runs reliably with real data, acceptable latency and cost, and required privacy and compliance controls.

Clear success criteria are essential in MVP development for AI, since model performance alone does not confirm product impact.
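One way to make these criteria concrete is to write them down as data before launch. The sketch below is illustrative only: the metric names and target values are hypothetical placeholders, and the `Criterion` class and `summarize` function are not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One success criterion with a target agreed before launch."""
    name: str
    kind: str               # "value" or "feasibility"
    target: float
    higher_is_better: bool

    def is_met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# Hypothetical targets for a support-drafting MVP; replace with your own.
CRITERIA = [
    Criterion("adoption_rate", "value", 0.40, True),
    Criterion("median_handle_time_min", "value", 6.0, False),
    Criterion("p95_latency_s", "feasibility", 3.0, False),
    Criterion("cost_per_request_usd", "feasibility", 0.02, False),
]


def summarize(observed: dict[str, float]) -> dict[str, bool]:
    """Report whether every criterion of each kind is met."""
    result = {"value": True, "feasibility": True}
    for criterion in CRITERIA:
        if not criterion.is_met(observed[criterion.name]):
            result[criterion.kind] = False
    return result


# Made-up measurements: users adopt the feature, but latency misses its target.
observed = {
    "adoption_rate": 0.46,
    "median_handle_time_min": 5.2,
    "p95_latency_s": 4.1,
    "cost_per_request_usd": 0.015,
}
print(summarize(observed))
```

Keeping value and feasibility as separate verdicts makes it visible when a feature users like is still too slow or too expensive to run.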

What an AI MVP should intentionally exclude

Keep the scope tight to avoid drifting into a full product build. Focus on one core problem and one primary workflow. Delay secondary use cases, broad integrations, deep personalization, and heavy optimization until you have evidence from real usage. Assisted workflows are often enough for early validation.

Why AI MVPs can be built faster today

Timelines have shortened thanks to pretrained foundation models, managed cloud services, and modern MLOps practices. Teams no longer need to train models from scratch for many use cases, and integration, evaluation, and deployment can happen in parallel instead of in sequential phases.

In many cases, a focused AI MVP can be delivered in four to six weeks rather than the traditional six months or more. The advantage lies in earlier validation: releasing essential AI-driven capabilities and observing real user behavior provides concrete data that guides the next iteration.

What Business Problem Should Your AI MVP Solve First?

Effective AI integration focuses on solving a specific, high-impact problem rather than adding AI for novelty. The question is simple: where does intelligence meaningfully improve an existing process as users interact with a basic AI product?

High-impact, contained use cases

Some use cases consistently fit MVP constraints because they address clear pain points and rely on structured workflows:

- support assistance (ticket triage, response drafting using natural language processing);

- search and knowledge retrieval across existing data assets;

- content drafting with predefined templates;

- workflow automation for repetitive internal tasks tied to business logic.

These areas share three traits: measurable outcomes, accessible data, and limited operational risk. They also let a development team ship a basic version using existing tools, without creating long-term technical debt from day one.

Jasper, for example, launched in about 30 days with a small set of GPT-3-powered templates. Early versions of ChatGPT and Midjourney also started with focused capabilities, reached users quickly, and improved through iteration. The scope was constrained. The feedback loop was short, driven by user testing.

Defining the job to be done

Before selecting a use case, define the “job to be done” in operational terms. Avoid broad goals such as “improve productivity.” Instead, frame the problem around a concrete action:

- Reduce average support response time.

- Help sales teams draft personalized outreach faster.

- Enable users to find relevant documents in seconds.

In our practice, we tie the job to measurable success metrics. These may include task completion time, adoption rate, error reduction, or engagement depth. AI analytics can help track usage patterns and identify churn risks early, providing evidence for the next iteration.

In the development of an AI MVP, clarity at this stage prevents overbuilding, poor tool choices, and misaligned experimentation that later slows down the final product.


Apply a feasibility filter

Some ideas appear attractive but collapse under practical constraints. A simple feasibility check helps filter them out.

Data access and quality

Do you have reliable, structured, and legally usable data assets? Building custom models from scratch often requires heavy data science investment and adds risk for an MVP.

Latency requirements

Does the workflow tolerate model response times? Real-time systems impose stricter constraints than background automation.

Risk and compliance level

Does the feature operate in a regulated domain? AI implementations must align with privacy regulations such as GDPR or CCPA from the beginning.

Operational complexity

Can the feature be delivered without large-scale infrastructure changes or deep system integrations?

If the answer to several of these questions is unclear, the use case may be too ambitious for the first release. It is likely to accumulate avoidable technical debt before the basic version proves value.
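The filter can be captured as a small checklist function. This is a minimal sketch under stated assumptions: the threshold for "several" unclear answers (more than one) and the rule that any "no" sends the idea back for rework are illustrative choices, not fixed rules.

```python
FEASIBILITY_QUESTIONS = {
    "data": "Is the data reliable, structured, and legally usable?",
    "latency": "Does the workflow tolerate model response times?",
    "compliance": "Are privacy and regulatory requirements understood?",
    "operations": "Can we ship without large infrastructure changes?",
}


def feasibility_verdict(answers: dict[str, str], max_unclear: int = 1) -> str:
    """Turn yes/no/unclear answers into a rough verdict.

    Assumption: more than `max_unclear` unclear answers means the use case
    is too ambitious for a first release; any "no" means rework the idea.
    """
    missing = set(FEASIBILITY_QUESTIONS) - set(answers)
    if missing:
        raise ValueError(f"Unanswered questions: {sorted(missing)}")
    values = [answers[key] for key in FEASIBILITY_QUESTIONS]
    if "no" in values:
        return "rework"
    if values.count("unclear") > max_unclear:
        return "too ambitious"
    return "proceed"


print(feasibility_verdict({
    "data": "yes",
    "latency": "unclear",
    "compliance": "unclear",
    "operations": "yes",
}))
```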

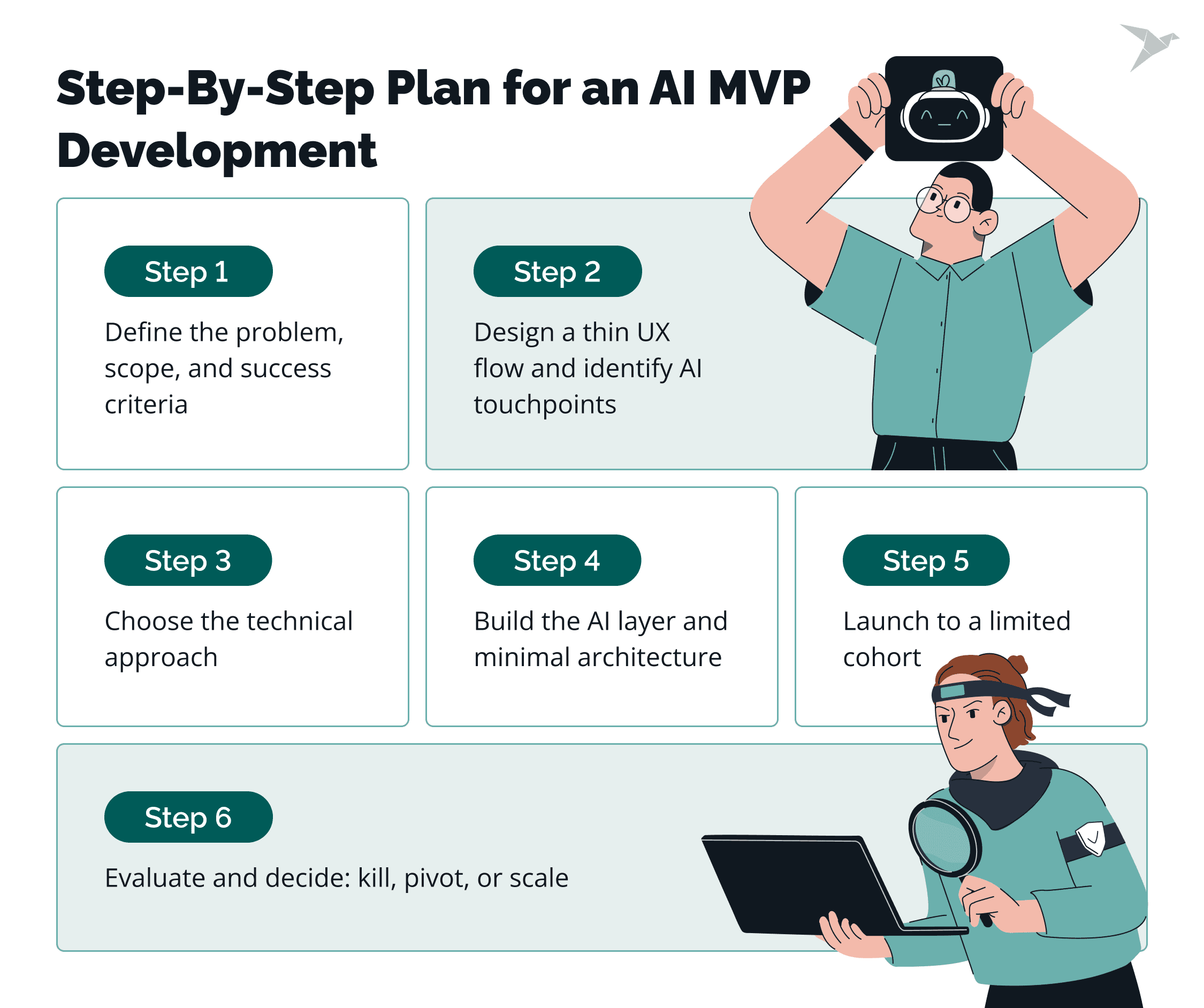

What Is the Fastest Step-By-Step Plan for AI MVP Development?

A fast AI MVP execution plan moves from problem definition to real-user validation in controlled steps. Each phase should end with clear artifacts that make the next decision objective.

This AI MVP development guide outlines a practical sequence from discovery to launch.

Step 1: Define the problem, scope, and success criteria

We always start with one clearly defined use case. Describe the job to be done, the target user, and the workflow where AI will intervene.

Deliverables at this stage:

- one-page problem statement;

- defined scope (what is included and excluded);

- constraints (data access, compliance, latency, budget);

- acceptance criteria and measurable success metrics.

Avoid expanding into multiple AI features. One use case is enough for the first release.

Step 2: Design a thin UX flow and identify AI touchpoints

Here, we map the minimal user journey required to test the hypothesis. The interface can be simple, but it must support real interaction with the AI component. This is one of the AI MVP development best practices.

Deliverables at this stage:

- basic user flow diagram;

- wireframes or lightweight UI mockups;

- defined AI input and output points;

- error and fallback handling logic.

At this stage, you can use “Wizard of Oz” testing if needed. Human input simulates AI responses to validate user behavior before full implementation.

Step 3: Choose the technical approach

At this stage, we select the simplest approach that satisfies the hypothesis. Options may include prompting with a foundation model, retrieval-augmented generation (RAG), limited fine-tuning, or agent-based orchestration.

Deliverables at this stage:

- documented architecture decision;

- tooling and framework selection;

- data sourcing plan (only what is required for the MVP);

- evaluation criteria for model performance.

Collect only the data necessary to test the use case. Large-scale data pipelines are rarely required at this stage.

Step 4: Build the AI layer and minimal architecture

Here, we implement the AI capability and integrate it into a usable product flow. The goal is functional validation, not optimization.

Deliverables at this stage:

- working AI integration;

- basic infrastructure setup;

- logging and monitoring configuration;

- internal testing results.

Automated testing tools can accelerate validation and reduce manual QA effort. The system should handle real inputs, even if performance tuning is minimal.

Step 5: Launch to a limited cohort

In most cases, best practice is to release the MVP to a controlled group of users. Exposure to real workflows is required to validate assumptions.

Deliverables at this stage:

- deployed MVP environment;

- defined user cohort;

- analytics setup for tracking usage and outcomes;

- feedback collection mechanism (qualitative and quantitative).

This stage measures adoption, reliability, and outcome improvement under real conditions.

Step 6: Evaluate and decide: kill, pivot, or scale

At the end, we review results against the predefined success criteria. Separate model metrics from business outcomes.

Deliverables at this stage:

- performance report (technical and product metrics);

- user feedback summary;

- cost and infrastructure assessment;

- clear decision: stop, adjust direction, or prepare for scale.

If the MVP meets its targets, planning for scale includes strengthening infrastructure, expanding features, and refining compliance controls. If not, use evidence to adjust the hypothesis or discontinue the initiative.
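The decision rule can be written down in advance so the review is not argued from scratch. The mapping below is an assumption made for illustration (both groups met means scale, one group met means pivot, neither means kill), and the metric names are hypothetical.

```python
def decide(business_met: dict[str, bool], technical_met: dict[str, bool]) -> str:
    """Map predefined success criteria to a kill / pivot / scale decision.

    Business outcomes and technical metrics are passed separately so that
    a strong model score cannot hide a weak product result, or vice versa.
    """
    if not business_met or not technical_met:
        raise ValueError("Both metric groups need at least one result.")
    business_ok = all(business_met.values())
    technical_ok = all(technical_met.values())
    if business_ok and technical_ok:
        return "scale"
    if business_ok or technical_ok:
        return "pivot"
    return "kill"


# Hypothetical review: the model is reliable, but adoption missed its target.
print(decide(
    business_met={"adoption_rate": False, "handle_time": True},
    technical_met={"p95_latency": True, "cost_per_request": True},
))
```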

A fast AI MVP plan is structured, measurable, and constrained. Each step reduces uncertainty and produces artifacts that support the next decision.

What Are Some Common Mistakes And Challenges When Building An AI MVP?

AI MVPs often fail for predictable reasons. The issues rarely relate to model intelligence alone. Most breakdowns happen around data readiness, reliability under real conditions, weak validation, or premature engineering decisions.

Understanding these patterns early reduces wasted time and budget.

Weak data readiness

Data issues are the most common cause of delays. Teams underestimate how fragmented, inconsistent, or incomplete their data is. Logs may exist, but labels are missing. Historical records may be stored, but formats differ. In some cases, the data needed to test the hypothesis is not accessible due to privacy or contractual limits.

Even when data exists, it may not reflect real-world variability. Models trained on narrow datasets perform well in testing and fail once exposed to broader user behavior.

Before building the AI layer, confirm:

- The required data is accessible and legally usable.

- It represents real production scenarios.

- It is clean enough to support consistent outputs.

Without this foundation, model iteration becomes guesswork.

Overengineering too early

Early AI projects often expand in scope before validation. Teams add features, build scalable infrastructure, or fine-tune complex models before proving that the core use case delivers value. This increases AI development cost and delays exposure to real users.

An MVP does not require full automation, deep personalization, or multi-system integrations. It requires a working hypothesis tested in a constrained workflow. Premature optimization consumes resources without reducing uncertainty.

Model unreliability in real workflows

AI systems behave differently outside controlled testing. Common issues include hallucinations, inconsistent outputs, failure on edge cases, and difficulty explaining results. When outputs appear unpredictable, user trust declines quickly, even if the product idea looked strong on paper.

Reliability must be evaluated in context:

- Does the model behave consistently under varied inputs, including messy or partially structured data?

- Are failure cases visible and manageable?

- Is there a fallback mechanism when confidence is low to protect user satisfaction?

Unclear outputs in an early usable version can damage credibility before you prove demand for even a single feature.
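A fallback can be as simple as a threshold check in front of the user-facing response. The sketch assumes your system can attach some confidence score between 0 and 1 to each draft (for example, derived from retrieval scores); the `Draft` class, the 0.7 threshold, and the fallback wording are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    confidence: float  # assumed to be in [0, 1]; how it is computed is up to you


FALLBACK_MESSAGE = "I'm not sure about this one, so I'm routing it to a teammate."


def respond(draft: Draft, threshold: float = 0.7) -> dict[str, str]:
    """Return the AI draft only when it is non-empty and confident enough."""
    if draft.text.strip() and draft.confidence >= threshold:
        return {"mode": "ai", "text": draft.text}
    return {"mode": "fallback", "text": FALLBACK_MESSAGE}


print(respond(Draft("Refunds are issued within 14 days.", 0.91)))
print(respond(Draft("Refunds might take a while.", 0.35)))
```

Recording the `mode` alongside each response also shows how often the fallback fires, which is a useful reliability signal in its own right.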

Undefined success metrics

Some teams launch AI features without defining what “good” means.

Technical metrics such as accuracy scores do not automatically translate into user value. Without predefined product metrics, such as task completion time, adoption rate, reduction in manual effort, or direct feedback signals, evaluation becomes subjective.

A well-defined problem and measurable outcome should exist before development begins. Clear metrics help save money, reduce wasted cycles, and highlight real competitive advantages instead of surface-level improvements.

Skipping real user validation

Internal testing is not a substitute for real usage. AI systems operate in unpredictable environments. If early releases are not exposed to real users, assumptions remain untested.

Even a clickable prototype can help prove demand and collect early signals. Lightweight feedback tools, structured surveys, and usage analytics provide direct feedback on clarity, usefulness, and friction points.

Releasing to a limited cohort allows you to test a single feature, validate data quality, and refine the experience before broader rollout. This step protects both credibility and budget.

Underestimating legal and compliance risks

In regulated industries, AI features must align with privacy laws and internal governance policies. GDPR, CCPA, and sector-specific regulations can restrict how data is processed and stored.

Compliance requirements should shape architecture decisions from the start. Retrofitting controls later increases cost, slows progress, and may require reworking core components of the usable version.


How Do You Design The Data And Knowledge Layer For An AI MVP?

The data and knowledge layer should support one AI capability with minimal complexity. At the MVP stage, the goal is consistent retrieval and traceable outputs, not full-scale data infrastructure, whether you’re using Google Cloud AI services, no-code platforms, or custom code.

Select only the data you truly need

Start with the smallest set of sources that directly support the use case and its core value. These might include product documentation, support articles, or internal records tied to the workflow you are improving. Confirm data permissions and privacy constraints early as part of idea validation. If usage rights or compliance requirements are unclear, resolve them before ingestion.

Relevance matters more than volume. Expanding sources too early increases noise and weakens retrieval quality, which makes it harder to prove real value.

Keep document processing lightweight

Convert documents into clean, structured text and remove obvious noise. Chunk content along natural boundaries such as sections or paragraphs so each piece preserves meaning. Extremely large chunks reduce precision. Very small chunks lose context.

Attach basic metadata such as source name and update date. This makes it easier to trace outputs and debug issues later, especially for a product manager validating core features and checking whether the answers match what users expect.
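A lightweight version of this processing fits in a few lines. The sketch below splits on blank lines, packs paragraphs into chunks up to a size limit, and attaches source and update date to each chunk. The `Chunk` class, the character limit, and the example file name and date are illustrative assumptions.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str   # where the text came from, for tracing answers back
    updated: str  # last update date of the source document
    index: int    # position of the chunk within the document


def chunk_document(text: str, source: str, updated: str,
                   max_chars: int = 800) -> list[Chunk]:
    """Group whole paragraphs into chunks of at most roughly max_chars.

    Paragraphs are never split, so a single paragraph longer than
    max_chars becomes its own oversized chunk.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks: list[Chunk] = []
    buffer = ""
    for paragraph in paragraphs:
        candidate = f"{buffer}\n\n{paragraph}" if buffer else paragraph
        if buffer and len(candidate) > max_chars:
            chunks.append(Chunk(buffer, source, updated, len(chunks)))
            buffer = paragraph
        else:
            buffer = candidate
    if buffer:
        chunks.append(Chunk(buffer, source, updated, len(chunks)))
    return chunks


# Hypothetical help-center article used only for illustration.
doc = (
    "Refunds are issued within 14 days.\n\n"
    "Contact support to start a refund.\n\n"
    "Shipping is free over $50."
)
chunks = chunk_document(doc, source="help-center/refunds.md",
                        updated="2026-01-15", max_chars=80)
for chunk in chunks:
    print(chunk.index, chunk.source, repr(chunk.text))
```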

Choose a practical retrieval approach

Match the retrieval method to the problem. Keyword search works when terminology is consistent and structured. Vector search performs better when users phrase queries differently from the original content. A hybrid approach can balance both patterns without introducing heavy ranking systems.

The MVP does not require advanced search tuning. It requires consistent and explainable retrieval: good enough for rapid prototyping, whether you’re testing with no-code tools or building directly on managed AI services.
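To illustrate how a hybrid approach blends the two signals, the sketch below mixes a keyword-overlap score with cosine similarity over embeddings. The two-dimensional vectors are hand-written stand-ins; in practice they would come from an embedding model. The function names and the `alpha` weight are assumptions for illustration.

```python
import math


def keyword_score(query: str, text: str) -> float:
    """Fraction of query words that also appear in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_rank(query: str, query_vec: list[float],
                docs: list[tuple[str, str, list[float]]],
                alpha: float = 0.5) -> list[str]:
    """Rank doc ids by alpha * keyword score + (1 - alpha) * vector score."""
    scored = [
        (alpha * keyword_score(query, text) + (1 - alpha) * cosine(query_vec, vec), doc_id)
        for doc_id, text, vec in docs
    ]
    return [doc_id for _, doc_id in sorted(scored, reverse=True)]


# Toy data: the vectors are made up, not produced by a real model.
docs = [
    ("refunds", "Refunds are issued within 14 days", [0.8, 0.2]),
    ("shipping", "Shipping is free and I get updates by email", [0.1, 0.9]),
]
query = "how do I get my money back"
query_vec = [0.9, 0.1]

print(hybrid_rank(query, query_vec, docs))             # blended ranking
print(hybrid_rank(query, query_vec, docs, alpha=1.0))  # keyword-only ranking
```

In this toy case the user's phrasing shares no words with the refund article, so keyword-only ranking prefers the wrong document, while the blended score recovers the right one.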

Ground responses and make them traceable

Model outputs should be generated from retrieved content, not from general knowledge alone. Passing relevant chunks into the prompt and limiting responses to that context reduces drift.

Provide references or visible citations to the source material. This increases transparency and simplifies evaluation when answers are incorrect.
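Grounding and citation can both be handled at prompt-assembly time. The sketch below numbers each retrieved chunk, includes its source, and instructs the model to answer only from that context. The prompt wording and the `build_grounded_prompt` helper are illustrative assumptions, and sending the prompt to a model is left out.

```python
def build_grounded_prompt(question: str, chunks: list[dict[str, str]]) -> str:
    """Assemble a prompt that restricts the answer to numbered, sourced context."""
    context = "\n".join(
        f"[{number}] ({chunk['source']}) {chunk['text']}"
        for number, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer using only the numbered context below. "
        "Cite the passages you used, like [1]. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical retrieved chunks for illustration.
retrieved = [
    {"source": "help-center/refunds.md", "text": "Refunds are issued within 14 days."},
    {"source": "help-center/shipping.md", "text": "Shipping is free over $50."},
]
prompt = build_grounded_prompt("How long do refunds take?", retrieved)
print(prompt)
```

Because each citation number maps to a known source, a wrong answer can be traced to either a retrieval miss or a generation error.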

Validate retrieval before optimizing models

Test the system using a small set of realistic questions. Review whether the correct passages are consistently retrieved. If retrieval fails, improving prompts or model parameters will not solve the underlying issue.
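A retrieval check like this can be scripted with a handful of question and expected-source pairs. The sketch below computes a hit rate at k. The `toy_retrieve` function is a word-overlap stand-in for your real retriever, and the documents and questions are made up.

```python
from typing import Callable

# Hypothetical mini knowledge base: doc id -> text.
DOCS = {
    "refunds": "refunds are issued within 14 days",
    "shipping": "shipping is free over 50 dollars",
    "login": "reset your password from my account page",
}


def toy_retrieve(question: str) -> list[str]:
    """Stand-in retriever: rank doc ids by word overlap with the question."""
    words = set(question.lower().split())
    return sorted(DOCS, key=lambda d: len(words & set(DOCS[d].split())), reverse=True)


def hit_rate_at_k(test_cases: list[tuple[str, str]],
                  retrieve: Callable[[str], list[str]], k: int = 3) -> float:
    """Share of questions whose expected doc appears in the top k results."""
    if not test_cases:
        raise ValueError("Provide at least one test case.")
    hits = sum(1 for question, expected in test_cases
               if expected in retrieve(question)[:k])
    return hits / len(test_cases)


cases = [
    ("when are refunds issued", "refunds"),
    ("is shipping free", "shipping"),
    ("how do i get my money back", "refunds"),  # paraphrase with no shared keywords
]
print(hit_rate_at_k(cases, toy_retrieve, k=1))
```

The paraphrased question is the one that fails here, which points at the retriever rather than the prompt or the model.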

A minimal, well-structured knowledge layer creates a stable base. Complexity can be added later, once the MVP proves value and the core features deliver real value in a real workflow.

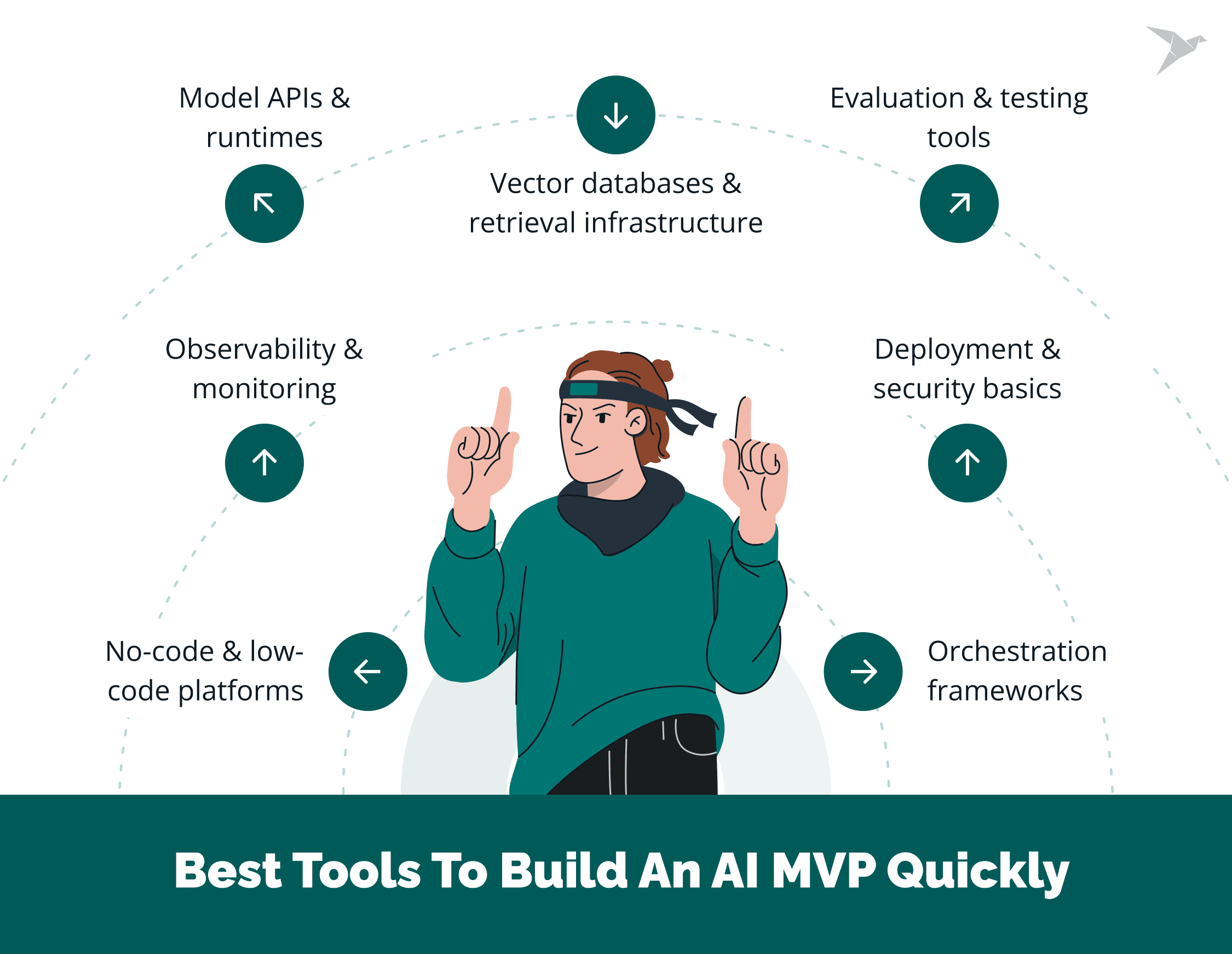

What Are The Best Tools To Build An AI MVP Quickly?

Tool choice affects speed more than model sophistication. For an MVP, prioritize integration ease, stable APIs, and operational maturity over flexibility. The goal is to ship, measure, and iterate.

Model APIs and runtimes

Use pretrained model APIs when you need production-ready language, vision, or embedding capabilities without training from scratch. Platforms such as OpenAI or Hugging Face provide managed access to foundation models, which removes infrastructure overhead and shortens setup time.

Use them when the problem can be solved with prompting, retrieval, or light customization. Avoid custom training unless your use case depends on proprietary data patterns that cannot be addressed with existing models. This approach aligns with current AI development trends, where teams build on top of mature ecosystems rather than starting from raw models.

Orchestration frameworks

Orchestration frameworks help structure prompts, manage tool calls, and connect retrieval with generation. They reduce glue code and make iteration easier.

Use them when your MVP involves multi-step reasoning, retrieval-augmented generation (RAG), or external tool access. If your workflow is simple (single prompt in, single response out), basic API integration may be enough. Choose frameworks with active maintenance and clear documentation to avoid hidden complexity.

Vector databases and retrieval infrastructure

Vector databases support semantic search by indexing embeddings. They are useful for knowledge assistants, document search, and internal copilots.

Use a managed vector database when your MVP requires contextual retrieval from unstructured documents. If terminology is structured and predictable, traditional or hybrid search may be sufficient. For speed, select hosted services that handle scaling and indexing without manual cluster management.

Evaluation and testing tools

AI systems require more than unit tests. Evaluation tools help measure response quality, detect regressions, and compare prompt or model variations.

Use them when outputs influence user decisions or business workflows. Even lightweight evaluation frameworks can track consistency and edge cases early. Automated testing platforms can also detect defects faster than manual review alone. Without structured evaluation, iteration becomes subjective.
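Even without a dedicated framework, a regression check can be a short script. The sketch below scores recorded outputs against phrases each answer must or must not contain, then lists cases that passed for the baseline prompt but fail for a candidate. The cases and outputs are hand-written examples, and phrase matching is a deliberately crude stand-in for richer quality scoring.

```python
# Hypothetical evaluation cases: phrases an acceptable answer must (not) contain.
CASES = {
    "refund_window": {"must_include": ["14 days"], "must_not_include": ["30 days"]},
    "shipping_cost": {"must_include": ["free"], "must_not_include": []},
}


def passes(output: str, must_include: list[str], must_not_include: list[str]) -> bool:
    text = output.lower()
    has_required = all(phrase.lower() in text for phrase in must_include)
    has_forbidden = any(phrase.lower() in text for phrase in must_not_include)
    return has_required and not has_forbidden


def evaluate(outputs: dict[str, str]) -> dict[str, bool]:
    """Score one prompt or model variant on every case."""
    return {case_id: passes(outputs[case_id], **spec) for case_id, spec in CASES.items()}


def regressions(baseline: dict[str, bool], candidate: dict[str, bool]) -> list[str]:
    """Cases that passed with the baseline but fail with the candidate."""
    return sorted(case for case in baseline if baseline[case] and not candidate[case])


# Recorded outputs from two prompt variants (made up for illustration).
baseline_outputs = {
    "refund_window": "Refunds are issued within 14 days.",
    "shipping_cost": "Shipping is free over $50.",
}
candidate_outputs = {
    "refund_window": "You can get a refund within 30 days.",
    "shipping_cost": "Shipping is free over $50.",
}
print(regressions(evaluate(baseline_outputs), evaluate(candidate_outputs)))
```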

Observability and monitoring

Logging prompts, responses, latency, and error rates is essential from the first release. Observability tools help track reliability and identify drift over time.

Use monitoring solutions when the MVP is exposed to real users. AI systems improve through feedback loops, and visibility into usage patterns supports both technical fixes and product decisions. Analytics tools can also help analyze engagement behavior and signal churn risk.
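As a starting point, the logging described above can be a thin wrapper around the model call. In this sketch `model_fn` is a hypothetical stand-in for whatever function calls your model, and an in-memory list stands in for a real log store.

```python
import time
from typing import Callable


def logged_call(model_fn: Callable[[str], str], prompt: str, log: list[dict]) -> dict:
    """Call the model and record prompt, response, latency, and any error."""
    record = {"prompt": prompt, "response": None, "error": None}
    start = time.perf_counter()
    try:
        record["response"] = model_fn(prompt)
    except Exception as exc:  # record the failure instead of crashing the flow
        record["error"] = repr(exc)
    record["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
    log.append(record)
    return record


def error_rate(log: list[dict]) -> float:
    """Share of logged calls that ended in an error."""
    return sum(1 for record in log if record["error"]) / len(log) if log else 0.0


# Stand-in model functions for illustration only.
def echo_model(prompt: str) -> str:
    return prompt.upper()


def broken_model(prompt: str) -> str:
    raise TimeoutError("model did not respond")


log: list[dict] = []
logged_call(echo_model, "summarize ticket 123", log)
logged_call(broken_model, "summarize ticket 456", log)
print(error_rate(log))
```

If prompts can contain personal data, the same wrapper is a natural place to redact fields before they are stored.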

Deployment and security basics

Use managed cloud platforms for hosting APIs and lightweight backends. Container services or serverless platforms reduce operational overhead.

Apply basic security practices from day one: access controls, encrypted data storage, and audit logging. In regulated domains, confirm that the selected providers meet relevant compliance requirements. Avoid building custom infrastructure unless there is a clear constraint that managed services cannot address.

No-code and low-code platforms

No-code and low-code tools such as Bubble, Glide, or Adalo can accelerate interface development, especially for non-technical founders.

Use them when you need to test user flows quickly without investing in full frontend engineering. These tools integrate with model APIs and allow fast iteration of UI and logic. They are particularly useful in early validation stages before committing to long-term architecture.

The right stack depends on the use case, but the principle remains consistent: choose mature, well-supported AI MVP development tools that minimize setup time and operational risk. Tooling should reduce uncertainty and enable fast validation, not introduce new engineering challenges.


Final Thoughts: Where AI MVPs Are Heading

AI MVP development is a discipline of focus. It connects one clearly defined problem to a working AI capability in a basic product, places it in front of users, and measures whether it delivers value under real constraints.

A demo proves a concept. A prototype validates interaction. A model experiment measures algorithm quality. An AI MVP ties technical performance to user outcomes and operational reality, often starting with a simple landing page to attract early users and test intent before a deeper build-out.

The teams that move fastest follow the same pattern: narrow scope, clear success criteria, minimal architecture, early user exposure, and evidence-based decisions. They avoid heavy pipelines, premature scaling, and feature expansion before validation. They treat data readiness and retrieval quality as first-order concerns rather than afterthoughts.

What’s next?

In 2026, two signals are shaping how teams build and ship. Gartner forecasts worldwide AI spending will reach $2.52 trillion in 2026 (44% YoY), which means more competition and higher expectations for operational maturity. Gartner also expects more than 80% of enterprises will have used GenAI APIs/models or deployed GenAI-enabled apps in production by 2026, up from under 5% in 2023.

What these signals point to: faster MVP cycles will remain the safest path, but the bar will rise. Teams will increasingly default to foundation-model APIs plus retrieval, treat evaluation and observability as required MVP components, and build traceability (grounding and citations) earlier, especially where privacy or regulatory constraints apply.

FAQ

How long does it take to build an AI MVP?

Most teams can build an AI MVP in 6 to 12 weeks. The biggest variables are how ready your data is, how quickly you can validate demand with real users, and how strict your requirements are around security and compliance.

Timelines usually stretch when teams don’t prioritize features early, or when they try to solve multiple use cases at once instead of shipping a focused first version.

What does a production-lean AI MVP need?

A production-lean AI MVP needs a thin product layer, a reliable way to call the model, a small and well-prepared data layer, and enough observability to understand what happens in real workflows.

In practice, that means a simple app or API that users interact with, a secure deployment on cloud infrastructure, structured data that supports the single use case, and basic logging, monitoring, and access controls. The goal is not a perfect platform. It’s a usable version that behaves predictably enough to learn from and improve.

What are the best tools for building an AI MVP?

The best tools are the ones that let you move fast without locking you into the wrong tech stack. For many teams, that means starting with managed AI services instead of heavy custom work, using cloud infrastructure that matches your security needs, and picking tooling that helps you validate demand through usage analytics and qualitative data.

Strong feedback tools also matter early, because direct signals from users often reveal what needs to change faster than internal assumptions. Finally, choose with post-launch support in mind, since the real cost of an AI MVP often starts after release, when you need to monitor performance, fix edge cases, and refine the experience.

TechMagic Academy
